Abstract

The minimum audible angle has been studied with a stationary listener and a stationary or a moving sound source. The study at hand focuses on a scenario where the angle is induced by listener self-translation in relation to a stationary sound source. First, the classic stationary listener minimum audible angle experiment is replicated using a headphone-based reproduction system. This experiment confirms that the reproduction system is able to produce a localization cue resolution comparable to loudspeaker reproduction. Next, the self-translation minimum audible angle is shown to be 3.3° in the horizontal plane in front of the listener.

The minimum audible angle (MAA) in azimuth for a stationary listener and a sound source has been established in multiple studies to be approximately 1° in the frontal listening area and to degrade gradually moving away from the median plane. In these studies, the test participant is typically seated in an anechoic chamber, and their movement is physically limited or otherwise discouraged. The sound event is fixed to a stationary loudspeaker, or in some studies to a moving boom to study the minimum audible movement angle (MAMA), where a single sound source is dynamically moved across space at a certain distance.

**Figure 1:** Listening test setup to determine the minimum audible movement angles (MAMA), where the seated listener has to discern a moving from a stationary source.

In contrast to MAA and MAMA studies, a natural way for humans to observe the world is an active process where motor functions support sensory information processing. Dynamic cues resulting from head rotation have been shown to resolve front-back confusions in binaural sound reproduction. Further dynamic cues due to listener translation in a sound field are less studied, but some results are presented stating that the dynamic setting eases the requirements for having individualized head-related transfer functions (HRTFs) in binaural audio reproduction.

This study explores the absolute perception of sound event stationarity in a dynamic six-degrees-of-freedom (DoF) setting where the listener rotates their head around three axes and self-translates across a three-dimensional space. The translational movement is typically encountered in everyday life, but it is also increasingly important for virtual and augmented reality audio as room-scale tracking becomes commonplace. The goal is to estimate a self-translation minimum audible angle (ST-MAA) and to compare this to a source-translation induced minimum audible movement angle, where the dynamic binaural cues elicited by the two types of translation are identical.
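The angle that a lateral self-translation induces relative to a stationary source follows from basic trigonometry. The sketch below is only an illustration under stated assumptions: the source starts straight ahead of the listener, the translation is purely lateral, and head orientation stays fixed. The function names are hypothetical and not taken from the study.

```python
import math


def azimuth_change_deg(lateral_shift_m: float, distance_m: float) -> float:
    """Azimuth of a source that was straight ahead at distance_m after the
    listener has shifted sideways by lateral_shift_m (assumed geometry)."""
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    return math.degrees(math.atan2(lateral_shift_m, distance_m))


def distance_for_angle_m(lateral_shift_m: float, angle_deg: float) -> float:
    """Source distance at which a sideways shift of lateral_shift_m
    produces an azimuth change of angle_deg (inverse of the above)."""
    if not 0.0 < angle_deg < 90.0:
        raise ValueError("angle_deg must lie strictly between 0 and 90")
    return lateral_shift_m / math.tan(math.radians(angle_deg))


if __name__ == "__main__":
    # Induced angle shrinks as the source moves farther away.
    for d in (0.5, 1.0, 2.0, 4.0):
        print(f"distance {d:.1f} m -> {azimuth_change_deg(0.25, d):.2f} deg")
```

Under this geometry, a fixed lateral movement yields a smaller angular change the farther away the source is, which is why varying the source distance can be used to vary the angle presented to the listener.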

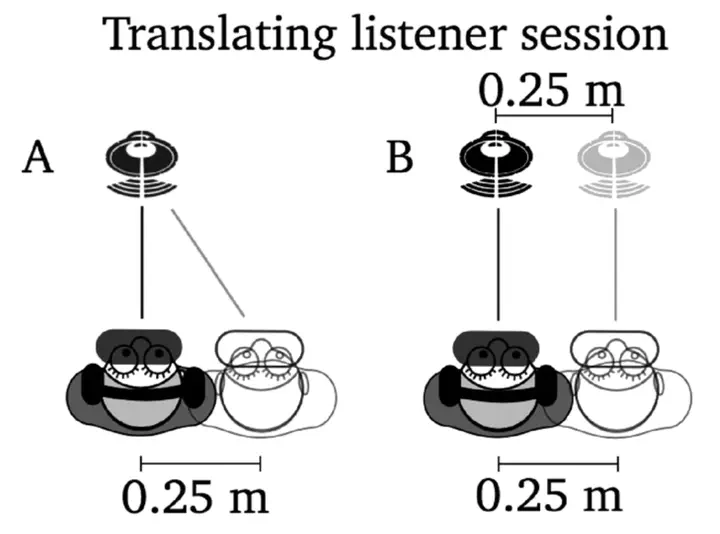

**Figure 2:** Listening test setup to determine the self-translation minimum audible angle (ST-MAA), where the translating listener has to discern a relatively moving from a relatively stationary source.

The two contrasting sessions (translating listener and translating source) produced similar audio signals to the ear canals, with the only difference being the participant self-translation or the lack thereof. Each participant’s correct answers were counted for each distance in the two sessions, and the probability of finding the target sound event was modeled by a Weibull distribution.
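A common form of the Weibull psychometric function can be sketched as follows. This is a minimal illustration, not the fitting procedure used in the study: the parameterization, the guess rate of 0.5, the lapse rate of 0, and the function names are all assumptions, and the stimulus level `x` stands for whatever quantity is varied (for example, the induced angle).

```python
import math


def weibull_psychometric(x: float, alpha: float, beta: float,
                         guess: float = 0.5, lapse: float = 0.0) -> float:
    """Predicted proportion correct at stimulus level x >= 0.

    alpha: scale (level where the underlying Weibull CDF reaches 1 - 1/e)
    beta:  slope (steepness of the function)
    guess: chance performance (assumed value, depends on the task)
    lapse: rate of stimulus-independent errors (assumed value)
    """
    if x < 0 or alpha <= 0 or beta <= 0:
        raise ValueError("x must be >= 0; alpha and beta must be > 0")
    cdf = 1.0 - math.exp(-((x / alpha) ** beta))
    return guess + (1.0 - guess - lapse) * cdf


def weibull_threshold(p: float, alpha: float, beta: float,
                      guess: float = 0.5, lapse: float = 0.0) -> float:
    """Stimulus level at which the model predicts proportion correct p."""
    core = (p - guess) / (1.0 - guess - lapse)
    if not 0.0 < core < 1.0:
        raise ValueError("p must lie strictly between guess and 1 - lapse")
    return alpha * (-math.log(1.0 - core)) ** (1.0 / beta)


if __name__ == "__main__":
    # Hypothetical parameters, chosen only to show the shape of the curve.
    for level in (0.5, 1.0, 2.0, 4.0, 8.0):
        print(level, round(weibull_psychometric(level, alpha=3.0, beta=2.0), 3))
```

Reading a threshold off the fitted curve then amounts to inverting the function at a chosen performance criterion, which is what `weibull_threshold` does for this assumed parameterization.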

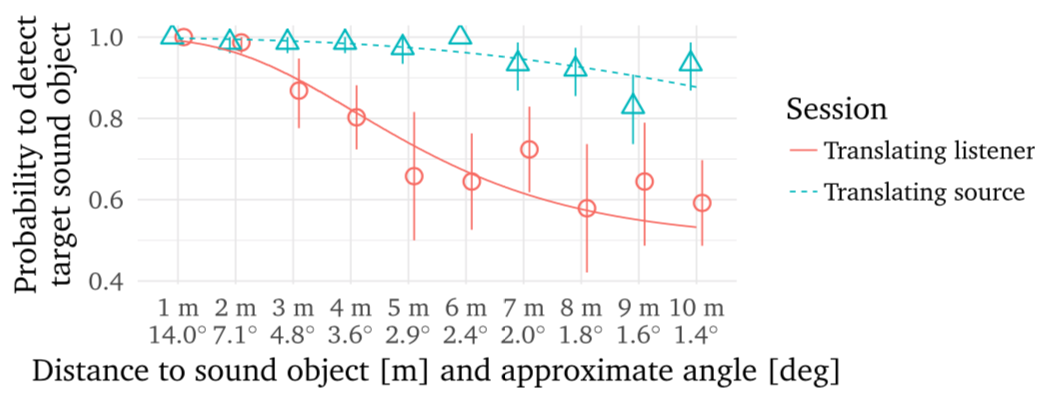

**Figure 3:** Psychometric functions for the translating source and translating listener sessions, modeled according to the Weibull distribution. The data points are the average of each participant’s average of four trials at each distance for the $\pm0.25$ m lateral translation range. The whiskers denote the 95% confidence interval of the mean.

Based on the results presented here, self-translation appears to impair absolute judgment of stationarity of sound events. Humans are shown to accept highly unnatural visual cues about spatial dimensions as long as they are consistent with self-translation. A similar mechanism may be at play in the auditory system, ignoring noisy sensory data when self-translation cues are strongly in favor of a specific interpretation. Potentially, the proprioceptive and vestibular information for small head rotations is more informative than the somatosensory cues available for whole-body translational movement. This conflict may contribute to the reduced capability to discriminate the conditions.